Self-Healing AI Agents: How Appifex Fixes 95% of Bugs Automatically

TechCrunch reported that the people who embrace AI the most are burning out the fastest. AI handles the boilerplate now, but most of that saved time goes straight to debugging the code it wrote. Build errors, missing dependencies, type mismatches, servers that won't start. Threads like "Vibe coding is so expensive" and "v0 went from pure magic to a total scam" describe the same pattern: the AI generates the problem and then you spend the next hour guiding it toward a fix.

The promise was more time on your product. The reality is most of that time goes to infrastructure babysitting. We built Appifex to fix that.

The error loop problem

Robert Herbig calls it the entropy loop: each AI fix changes code the next fix doesn't expect, and the codebase decays faster than the model can repair it. CodeRabbit's analysis of 470 pull requests found AI-generated code carries 1.7x more issues than human code.

Most AI builders handle this the same way: paste the stack trace back into the model and ask it to fix it. The model changes five things at once, some unrelated. The error moves but doesn't go away. Each retry drifts the code further from working.

The problem isn't the model. Vercel showed this when v0's AutoFix pipeline pushed their error-free rate from 62% to 93%, not by switching models but by adding structure. The model was always capable. It just needed a process.

How Appifex self-heals

Greg Pstrucha's "Shift More Left" makes the key point: the cheapest bug to fix is the one you catch before the app runs. A missing import caught by static analysis costs nothing. The same bug caught by a failing endpoint test costs a server boot, HTTP probes, and an LLM fix attempt.

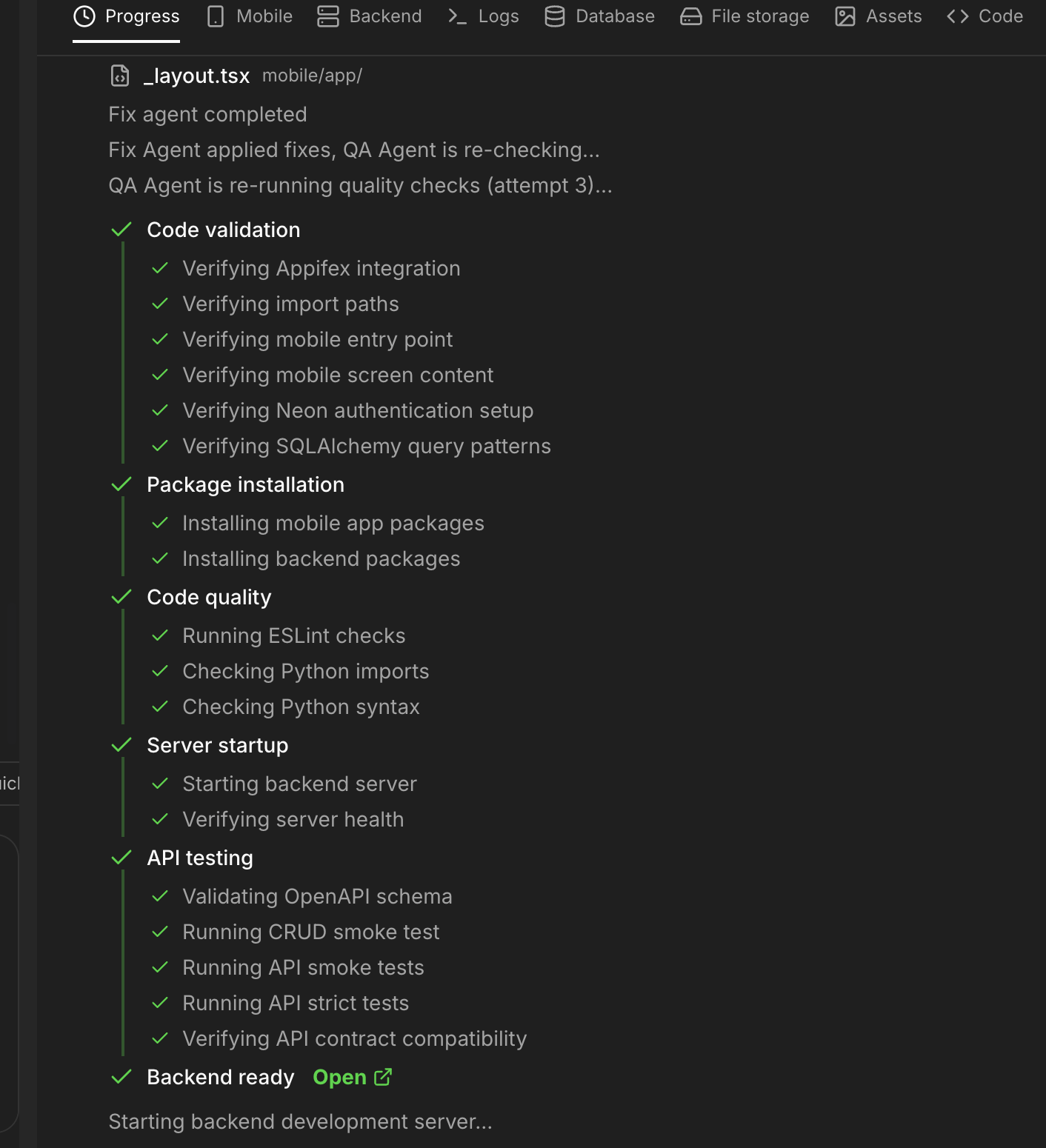

Appifex runs a six-stage QA pipeline after every code generation, cheapest checks first. The stages include code validation, package installation, code quality, server startup, and API testing. Each stage is a gate. If packages don't install, there's no point booting the server. If the server won't start, there's no point testing endpoints. When something fails, a fix agent diagnoses the root cause and patches the code, then the pipeline reruns. This catches 95% of issues before you ever see them.
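To make the gating idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Appifex's actual implementation: the project is a plain dict, and `code_validation`, `server_startup`, and `toy_fix` are hypothetical stand-ins for real checks and for the fix agent. The point it shows is the control flow: stages run cheapest first, the first failure stops the run, a fix is applied, and the pipeline starts over.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StageResult:
    ok: bool
    error: str = ""


# A stage is a name plus a check over the generated project (a plain dict here).
Stage = tuple[str, Callable[[dict], StageResult]]


def run_pipeline(project: dict, stages: list[Stage]) -> Optional[tuple[str, str]]:
    """Run stages in order; return (stage_name, error) for the first failure."""
    for name, check in stages:
        result = check(project)
        if not result.ok:
            return name, result.error  # gate: later, costlier stages never run
    return None


def self_heal(project: dict, stages: list[Stage], fix, max_attempts: int = 3) -> bool:
    """Rerun the whole pipeline after each fix, up to max_attempts fixes."""
    for _ in range(max_attempts):
        failure = run_pipeline(project, stages)
        if failure is None:
            return True
        stage_name, error = failure
        fix(project, stage_name, error)  # hypothetical fix agent
    return run_pipeline(project, stages) is None


# Toy stages, ordered cheapest first (assumed for illustration only).
def code_validation(project: dict) -> StageResult:
    missing = project["imports_used"] - project["imports_declared"]
    return StageResult(not missing, f"missing imports: {sorted(missing)}")


def server_startup(project: dict) -> StageResult:
    project["boots"] = project.get("boots", 0) + 1  # stands in for a costly boot
    return StageResult(True)


def toy_fix(project: dict, stage_name: str, error: str) -> None:
    project["imports_declared"] |= project["imports_used"]


STAGES = [("code validation", code_validation), ("server startup", server_startup)]
```

In this toy setup, a project with an undeclared import fails at code validation without ever paying for a server boot. Only after the fix lands does the pipeline reach the startup stage.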

The pipeline doesn't just check whether the code compiles. It starts the actual server inside a cloud sandbox (a personal computer we give every user for free), hits real endpoints, and verifies that the frontend's API calls match the backend's routes. Known failure patterns get fixed deterministically without even invoking an LLM. And a circuit breaker stops the loop if the same error keeps repeating, so we're never burning tokens going in circles.
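The last two ideas, deterministic fixes and the circuit breaker, can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `KNOWN_FIXES` table, the remedy strings, the fingerprinting rule, and the `max_repeats` threshold are hypothetical, not Appifex's real patterns or limits.

```python
import hashlib
import re
from collections import Counter
from typing import Optional

# Hypothetical table mapping known failure patterns to deterministic remedies.
KNOWN_FIXES = [
    (re.compile(r"ModuleNotFoundError: No module named '([\w.]+)'"),
     lambda m: f"add dependency: {m.group(1).split('.')[0]}"),
    (re.compile(r"Address already in use"),
     lambda m: "pick a free port"),
]


def deterministic_fix(error: str) -> Optional[str]:
    """Return a remedy for a known pattern, or None if an LLM would be needed."""
    for pattern, remedy in KNOWN_FIXES:
        match = pattern.search(error)
        if match:
            return remedy(match)
    return None


def fingerprint(error: str) -> str:
    """Replace volatile details (numbers, hex addresses) so repeats hash equal."""
    normalized = re.sub(r"0x[0-9a-fA-F]+|\d+", "N", error)
    return hashlib.sha256(normalized.encode()).hexdigest()[:12]


class CircuitBreaker:
    """Stops the fix loop once the same error has been seen too many times."""

    def __init__(self, max_repeats: int = 2):
        self.max_repeats = max_repeats
        self.seen = Counter()

    def allow(self, error: str) -> bool:
        """Record the error; return False once it exceeds max_repeats."""
        key = fingerprint(error)
        self.seen[key] += 1
        return self.seen[key] <= self.max_repeats
```

A fix loop built on this would try `deterministic_fix` first, fall back to the model only when it returns `None`, and check `CircuitBreaker.allow` before each attempt. Fingerprinting matters because the same underlying error often reappears with a different line number after a failed fix. Note that mapping a module name straight to a package name is a simplification; the two do not always match.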

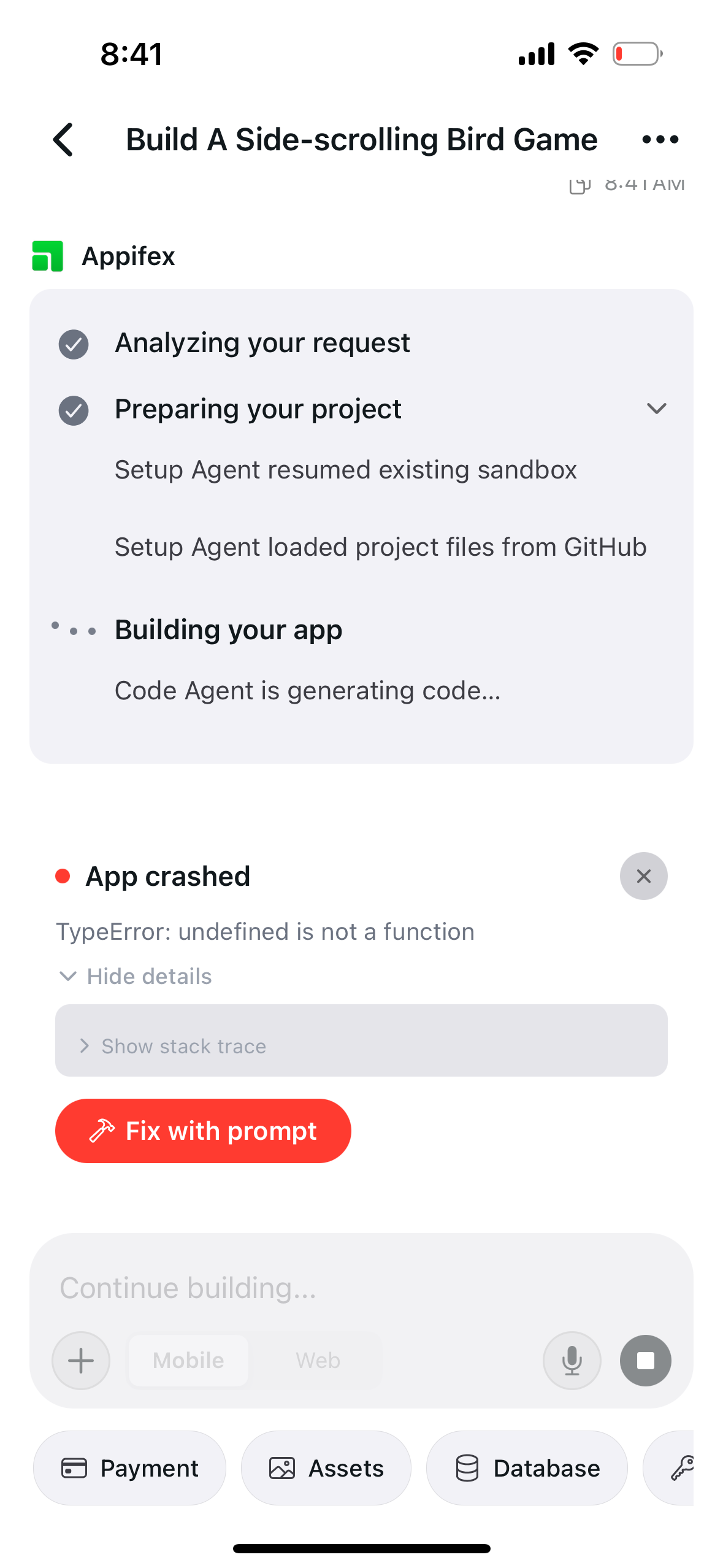
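The check that frontend API calls match backend routes can be illustrated with a small comparison over (method, path) pairs. This is a sketch under assumptions: how the calls and routes get extracted from the code is out of scope, and the placeholder rules in `normalize` (treating `:id`, `{id}`, `${id}`, and bare numbers as path parameters) are hypothetical choices, not a description of how Appifex does it.

```python
import re

# Segments treated as path parameters: :id, {id}, ${id}, or a bare number.
PARAM_SEGMENT = re.compile(r":\w+|\$?\{[^{}]+\}|\d+")


def normalize(path: str) -> str:
    """Drop the query string and trailing slash; collapse parameters to '*'."""
    path = path.split("?", 1)[0].rstrip("/") or "/"
    segments = []
    for segment in path.split("/"):
        if PARAM_SEGMENT.fullmatch(segment):
            segments.append("*")
        else:
            segments.append(segment)
    return "/".join(segments)


def unmatched_calls(frontend_calls, backend_routes):
    """Return frontend (method, path) pairs with no corresponding backend route."""
    known = {(method.upper(), normalize(path)) for method, path in backend_routes}
    return [
        (method, path)
        for method, path in frontend_calls
        if (method.upper(), normalize(path)) not in known
    ]
```

Given backend routes `GET /api/users/{id}` and `POST /api/users`, a frontend call to `DELETE /api/users/42` would be reported as unmatched, while `GET /api/users/42` would pass. A mismatch like this is cheap to detect statically and expensive to discover as a 404 in a running app.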

When something slips through

Across thousands of apps built on Appifex in the last month, the pipeline resolves 95% of issues automatically. For the remaining 5% that surface at runtime, Appifex detects the error automatically, shows you exactly what happened, and lets you confirm a fix with one tap.

We could automate this step too. We chose not to. Runtime behavior is where your product decisions live — how error states look to users, what happens on edge cases, whether a failure should retry or show a message. You should be in the loop on product decisions. You shouldn't be in the loop on missing imports.

Your time back where it matters

The pattern across AI tooling is clear: the tool handles the generation, then hands you the debugging. Your time shifts from building to babysitting. Forbes reported on AI burnout as an emerging workplace problem — the freed-up hours don't stay freed, they fill with new tedium.

Self-healing inverts this. The pipeline absorbs the tedious infrastructure and debugging work. Runtime errors surface with enough context to fix in one click. Your time goes to the things only you can decide: what to build, who it's for, when to pivot. The stuff that actually matters.

The AI was always fast at writing code. The missing piece was a system that takes responsibility for making it work.

To see how the architecture behind the QA pipeline is structured, read production-grade AI code. For the full infrastructure story, see how we rebuilt Google and Meta's developer tools. Ready to try it? Start building for free.