From Google and Meta to a Free AI App Builder

The developer infrastructure at Google and Meta is a decade ahead of anything available outside. I'm not exaggerating. Ex-Googlers maintain a lookup table just to map internal tools to external equivalents, because the gap is that wide. Steve Yegge, who worked at both Google and Amazon, put it plainly: "Nobody had done dev tools right, and only Google has gotten close."

When I left, it felt like going from a sports car to a bicycle.

So I rebuilt it. The parts that matter for app development, anyway. And I made it free.

Cloud dev environments

Google has CitC (Clients in the Cloud), a FUSE filesystem that presents the entire 2-billion-line monorepo to every engineer but stores only the files they actually modify. Everything else is read on demand from the repository. The average workspace holds fewer than 10 files. Engineers switch machines and pick up exactly where they left off. Your laptop is a thin client. Many Google engineers use Chromebooks as their primary machine. That's how good the cloud infra is.
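The copy-on-write idea behind a CitC-style workspace fits in a few lines. This is a toy in-memory model, not Google's implementation: `OverlayWorkspace`, the shared `repo` dict, and the file paths are hypothetical names chosen for illustration. Reads fall through to a shared, read-only snapshot; only files an engineer writes are stored in their own workspace.

```python
from types import MappingProxyType


class OverlayWorkspace:
    """Toy copy-on-write view over a shared, read-only repo snapshot (hypothetical)."""

    def __init__(self, base_snapshot: dict[str, str]) -> None:
        # Shared snapshot of the whole repo, wrapped read-only so no workspace can mutate it.
        self._base = MappingProxyType(base_snapshot)
        # Only files modified in this workspace are stored here.
        self._local: dict[str, str] = {}

    def read(self, path: str) -> str:
        # Local edits shadow the snapshot; everything else falls through on demand.
        if path in self._local:
            return self._local[path]
        return self._base[path]

    def write(self, path: str, contents: str) -> None:
        # Writes land in the overlay only, so other workspaces never see them.
        self._local[path] = contents

    def modified_files(self) -> list[str]:
        return sorted(self._local)


# A stand-in "monorepo" of 1,000 files shared by two engineers.
repo = {f"src/file_{i}.py": f"# file {i}\n" for i in range(1_000)}
alice = OverlayWorkspace(repo)
bob = OverlayWorkspace(repo)

alice.write("src/file_42.py", "# alice's change\n")
print(alice.modified_files())   # ['src/file_42.py']
print(len(bob.modified_files()))  # 0
```

The design choice worth noticing: the cost of a workspace scales with what you change, not with the size of the repository.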

Meta has OnDemand, isolated dev servers where you test changes in a production-like environment before they even hit code review. Meta is also more generous with hardware. When I was there, you could grab $300 Bose headphones, the latest iPhone, spare chargers, or a Mac upgrade anytime, no questions asked. Mobile engineers each had a laptop AND an $8,000 Mac Pro on their desk just for builds.

But the philosophy at both companies is the same: everything runs in the cloud, and your local machine is just a window into it.

Appifex gives every user the same model. Every project gets its own cloud sandbox. Nothing to install. Close your browser, come back in a week, it's still there. Want to build an iOS app but don't have a Mac? Talk to your phone and we handle the cloud build, code signing, and App Store submission. Your phone is the thin client now.

Mobile

This is where Appifex goes further than what either company offers individual engineers.

Meta invested heavily in server-driven mobile UIs. The idea: write PHP on the server, render native components on the client. Every engineer is full-stack, everyone ships impact fast. It works for Meta's culture. But mobile performance takes a hit. If you've ever felt Instagram getting janky, now you know why.

At Meta, React Native powers greenfield projects and smaller product surfaces, including parts of Instagram, Ads Manager, and dozens of internal tools.

Google owns Android and built Flutter. But Flutter runs on Dart, a language with limited industry adoption outside Google, and Flutter is losing momentum. Google's newer push is Kotlin Multiplatform (KMP), JetBrains' framework for sharing code between Android and iOS, but adoption is early.

Appifex generates real React Native apps using the same framework Meta uses. Cross-platform. Over-the-air updates. Preview on your real phone in seconds by scanning a QR code. No Xcode. No Android Studio. No simulator.

Native SwiftUI support is launching soon for high-performance iOS apps, games, and Vision Pro experiences. Kotlin Multiplatform is in research.

Most AI app builders give you a PWA and call it mobile. A website with an app icon gets limited or inconsistent access to the camera, haptics, biometrics, offline SQLite, and push notifications, especially on iOS, and rarely matches native 60fps animations. Users feel the difference immediately. We wrote more about this in our post on the mobile app gap.

Source control and code review

Google has Piper + Critique. They call it a CL (changelist). Critique has full code intelligence embedded in the review UI: jump-to-definition across 2 billion lines, Tricorder analysis results surfaced inline with one-click "Please fix" buttons, and automated build and test runs before any human reviews the code.

Meta has Mercurial + Phabricator. They call it a diff. Stacked diffs instead of branches, automated code owner routing via Herald, and Sandcastle CI results posted on every diff. Jackson Gabbard wrote the canonical essay on why this workflow is superior to pull requests: with PRs, the easiest behavior is "shoehorning in a bunch of shit under one PR because it's just so much work to get code out for review." Meta's tooling makes small, focused changes the path of least resistance.

The outside world calls it a PR. And most of us are still copy-pasting CI logs.

Appifex auto-creates a GitHub repo for every project. Each generation session is a branch. Push from GitHub and Appifex sees it. Generate from Appifex and GitHub has the commit. An AI agent reviews your PRs and patches issues. Merge conflicts? Tag @appifex-ai on the PR and an AI agent resolves them automatically. We covered the full code ownership model in a separate post.

Static analysis and QA

Google's Tricorder is a static analysis platform that processes 50,000 code reviews per day across 30+ languages with 100+ analyzers. The key design constraint: a false positive rate below 5%. That's what it takes for engineers to actually trust and act on the results. Tricorder catches your bug, suggests a fix, and puts a "Please fix" button in the review before a human even looks at the code.

Meta built an entire programming language to solve this problem. Hack has a type checker that runs on every keystroke, providing real-time AST analysis across the codebase. Infer does interprocedural static analysis for memory leaks and thread safety. Sandcastle runs the full test suite on every diff. Nothing lands without passing.

Appifex runs a 6-stage QA pipeline after every code generation, ordered cheapest checks first:

1. Code pattern analysis
2. Dependency checks
3. Linting
4. Build verification
5. Server startup
6. Live API endpoint testing

Each stage is a gate. If packages don't install, there's no point booting the server. 95% of issues are resolved before you ever see them. You just see "Ready to preview." More detail on this pipeline in our self-healing agents post.
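"Each stage is a gate" is essentially short-circuit evaluation over an ordered list of checks. A minimal sketch, assuming each stage is a function that returns an error message or `None`. `run_gated_pipeline`, `PipelineResult`, and the lambda stubs are hypothetical stand-ins, not Appifex's actual checks.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A stage inspects the project and returns None on success or an error message on failure.
Stage = Callable[[dict], Optional[str]]


@dataclass
class PipelineResult:
    passed: list[str] = field(default_factory=list)
    failed_stage: Optional[str] = None
    error: Optional[str] = None

    @property
    def ready(self) -> bool:
        return self.failed_stage is None


def run_gated_pipeline(project: dict, stages: list[tuple[str, Stage]]) -> PipelineResult:
    """Run stages in order (cheapest first) and stop at the first failing gate."""
    result = PipelineResult()
    for name, check in stages:
        error = check(project)
        if error is not None:
            result.failed_stage, result.error = name, error
            return result  # later, more expensive stages never run
        result.passed.append(name)
    return result


# Hypothetical stub checks; real ones would lint files, install packages, boot the server, etc.
STAGES: list[tuple[str, Stage]] = [
    ("code pattern analysis", lambda p: None),
    ("dependency checks", lambda p: None if p["deps_ok"] else "package install failed"),
    ("linting", lambda p: None),
    ("build verification", lambda p: None),
    ("server startup", lambda p: None),
    ("live API endpoint testing", lambda p: None),
]

broken = run_gated_pipeline({"deps_ok": False}, STAGES)
print(broken.failed_stage, broken.passed)  # dependency checks ['code pattern analysis']

healthy = run_gated_pipeline({"deps_ok": True}, STAGES)
print(healthy.ready)  # True
```

Ordering by cost means a broken dependency is reported after two cheap checks instead of after a slow build and server boot.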

Self-healing infrastructure

Google has Borg, the internal cluster management system that predates Kubernetes by over a decade. A job dies? Borg restarts it on another machine before anyone notices. Health checks, automatic rescheduling, priority preemption. Infrastructure that heals itself.

Meta has Conveyor, which handles 100,000 deploys per week, 97% of them fully automated with zero manual intervention. Something breaks in production? Automatic rollback. No one gets paged.

Appifex takes a different approach because the problem is different. Instead of healing running services, we heal generated code. The AI agent doesn't guess from a pasted stack trace the way you'd use ChatGPT. It lives inside the cloud sandbox.

Package install fails? The agent sees the exact error from the real process. Server won't start? It reads the actual stack trace. API endpoint returns 500? It hits the endpoint, reads the response, and patches the code. It's a closed feedback loop: generate, run, observe, fix. Up to 5 cycles, with a circuit breaker that stops the loop if the same error keeps repeating. The agent isn't debugging from screenshots. It's operating inside the environment.
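The generate, run, observe, fix loop with a cycle cap and a repeated-error circuit breaker can be sketched as follows. This illustrates the control flow under stated assumptions, not the agent's actual code: `run_in_sandbox` and `propose_fix` are hypothetical callables standing in for the sandboxed process and the model, and "the same error keeps repeating" is interpreted here as an identical error string appearing `max_repeats` times in a row (an assumed threshold).

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class HealResult:
    code: str
    healthy: bool
    fixes_applied: int
    stopped_by_breaker: bool = False


def self_heal(
    code: str,
    run_in_sandbox: Callable[[str], Optional[str]],  # hypothetical: error text, or None if healthy
    propose_fix: Callable[[str, str], str],          # hypothetical: (code, error) -> patched code
    max_cycles: int = 5,
    max_repeats: int = 2,                            # assumed breaker threshold
) -> HealResult:
    last_error: Optional[str] = None
    repeats = 0
    for fixes in range(max_cycles):
        error = run_in_sandbox(code)  # observe the real process, not a pasted trace
        if error is None:
            return HealResult(code, True, fixes)
        repeats = repeats + 1 if error == last_error else 1
        last_error = error
        if repeats >= max_repeats:
            # Circuit breaker: the same error survived a fix, so stop looping.
            return HealResult(code, False, fixes, stopped_by_breaker=True)
        code = propose_fix(code, error)  # patch using the exact error text
    # Out of cycles: verify the last patch once before giving up.
    return HealResult(code, run_in_sandbox(code) is None, max_cycles)


# Toy stand-ins: "code" is a string, and each "BUG" token is an error at a distinct position.
def toy_sandbox(code: str) -> Optional[str]:
    return f"NameError at {code.index('BUG')}" if "BUG" in code else None


def toy_fixer(code: str, error: str) -> str:
    return code.replace("BUG", "", 1)  # fixes one occurrence per cycle


fixed = self_heal("a BUG b BUG", toy_sandbox, toy_fixer)
print(fixed.healthy, fixed.fixes_applied)  # True 2

stuck = self_heal("a BUG", toy_sandbox, lambda code, error: code)  # a fixer that changes nothing
print(stuck.healthy, stuck.stopped_by_breaker)  # False True
```

The breaker matters because a model that keeps producing the same broken patch would otherwise burn every remaining cycle on an error it has already failed to fix.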

Deploys

Google has Rapid, pushing to production in minutes with automatic canary analysis. Bad metrics? Auto-rollback before users notice.

Meta does 100,000 deploys per week. Every deploy rolls through staged gates: intern, c1, c2, c3, each expanding to a larger percentage of production traffic. If metrics degrade at any stage, it stops. No human in the loop. In 2017, Facebook moved its web tier to quasi-continuous deployment from master, pushing tens to hundreds of diffs every few hours through the same tiered rollout.
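The staged-gate idea reduces to a loop over widening tiers with a health check between them. A minimal sketch: the tier names come from the paragraph above, but `staged_rollout`, the `error_rate` metric source, and the 1% threshold are hypothetical illustrations, not Meta's actual system or numbers.

```python
from typing import Callable

TIERS = ["intern", "c1", "c2", "c3"]  # each tier exposes the build to more traffic


def staged_rollout(
    deploy_to: Callable[[str], None],
    error_rate: Callable[[str], float],
    max_error_rate: float = 0.01,  # illustrative threshold, not Meta's
) -> list[str]:
    """Promote tier by tier and halt at the first tier whose metrics degrade.

    Returns the tiers that passed their health check.
    """
    healthy: list[str] = []
    for tier in TIERS:
        deploy_to(tier)
        if error_rate(tier) > max_error_rate:
            # No human in the loop: the rollout simply stops here.
            return healthy
        healthy.append(tier)
    return healthy


deployed: list[str] = []
observed = {"intern": 0.001, "c1": 0.002, "c2": 0.05, "c3": 0.0}
passed = staged_rollout(deployed.append, observed.__getitem__)
print(passed)    # ['intern', 'c1']
print(deployed)  # ['intern', 'c1', 'c2'] (c3 is never reached)
```

A real system would also roll the bad tier back; this sketch only shows the "stop at the first bad gate" behavior the paragraph describes.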

Appifex collapses this into one click. Hit publish and the system auto-provisions your infrastructure, runs database migrations, signs your iOS binary, submits to TestFlight, and pushes to the Play Store. Every step is a durable checkpoint. If the process crashes mid-deploy, it picks up exactly where it left off.
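Resuming "exactly where it left off" usually means persisting each completed step before starting the next. A minimal sketch, assuming a JSON file as the checkpoint store and hypothetical step names that mirror the paragraph. A production system would more likely use a durable workflow engine or a database, and each step should be idempotent, because a crash between finishing a step and recording it means that step runs again.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

# Step names mirror the publish flow described above; they are hypothetical.
STEPS = [
    "provision_infrastructure",
    "run_migrations",
    "sign_ios_binary",
    "submit_to_testflight",
    "push_to_play_store",
]


def publish(actions: dict[str, Callable[[], None]], checkpoint: Path) -> list[str]:
    """Run steps not yet recorded in the checkpoint; return the steps run this time."""
    done: list[str] = json.loads(checkpoint.read_text()) if checkpoint.exists() else []
    ran: list[str] = []
    for step in STEPS:
        if step in done:
            continue  # finished in an earlier run, so skip it
        actions[step]()
        done.append(step)
        # Write-then-rename so a crash mid-write can't leave a corrupt checkpoint.
        tmp = checkpoint.with_suffix(".tmp")
        tmp.write_text(json.dumps(done))
        tmp.replace(checkpoint)
        ran.append(step)
    return ran


# Simulate a crash while signing the iOS binary on the first attempt.
calls: list[str] = []
crash_once = {"sign_ios_binary"}


def make_action(name: str) -> Callable[[], None]:
    def action() -> None:
        if name in crash_once:
            crash_once.discard(name)
            raise RuntimeError(f"crashed during {name}")
        calls.append(name)
    return action


actions = {name: make_action(name) for name in STEPS}
ckpt = Path(tempfile.mkdtemp()) / "publish.json"

try:
    publish(actions, ckpt)
except RuntimeError:
    pass  # the process "died" here; the checkpoint survives

print(publish(actions, ckpt))  # ['sign_ios_binary', 'submit_to_testflight', 'push_to_play_store']
```

On the second run, infrastructure provisioning and migrations are skipped because the checkpoint already records them.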

You describe an app on your couch. It's in the App Store under your name.

Database isolation

Google has Spanner, globally distributed and ACID-compliant, using atomic clocks for transaction ordering. Every team gets isolated namespaces. Dev never touches prod.

Meta runs a massive MySQL fleet with schema isolation per service. Developers test against sandboxed database instances. You can't accidentally corrupt production data because the infrastructure won't let you.

Appifex uses Neon's database branching. Every session gets its own isolated database branch. Development is completely separated from production. You never write a migration file. You never touch a connection string. Your users' production data is never at risk during development.

Break things freely. Production doesn't care.

Fullstack means having an actual backend

Most AI app builders generate a React frontend, wire it to Supabase, and call it fullstack. Your business logic lives in RPC calls. Your auth is a third-party widget. Your database is someone else's Postgres with a REST API on top. You don't own any of it.

At Google and Meta, every product has real backend services. Custom API routes. Business logic that runs on infrastructure you control. Real products need real backends.

Appifex generates a real FastAPI backend with proper API routes, SQLAlchemy models, Alembic migrations, and auth built in. The frontend talks to your API, not Supabase's. You own the code. It's in your GitHub repo. Deploy it to Vercel, Railway, or your own server.

Code ownership

Base44 locks GitHub integration behind their $40/month Builder plan, and even then only frontend code exports. Backend logic stays on their servers permanently. Other builders paywall code access or restrict it to higher tiers. Stop paying and you lose access to what you built.

At Google and Meta, every engineer has full access to the entire codebase. Billions of lines. No paywall. No permission needed. Code is a shared asset, not a hostage.

Appifex puts your code in a GitHub repo from minute one. Standard React, FastAPI, and React Native. No proprietary SDK. No @appifex/runtime. Run npm install && npm run dev for the frontend, pip install -r requirements.txt && python -m uvicorn app.main:app (or Poetry, or uv — your call) for the backend, and it all works locally. Hand it to a contractor who's never heard of Appifex. It just works. We covered the full ownership model and the lock-in playbook in a dedicated post.

Stop paying us and nothing breaks. Your code is yours. That's not a feature, that's a baseline.

Observability

Google has Monarch, a planet-scale monitoring system that stores close to a petabyte of compressed time series data in memory, and Dapper, the distributed tracing system that inspired OpenTelemetry. Older tools, harder to use, but battle-tested at a scale nothing else touches.

Meta built Scuba, a real-time analytics system that ingests millions of rows per second, entirely in memory, with sub-second query latency. Plus internal experimentation infrastructure (Deltoid + QuickExperiment) that Statsig's founder, Vijaye Raji, took and rebuilt for the outside world. Now part of OpenAI's stack.

Appifex is integrating Sentry for error tracking, Datadog for metrics, and Statsig for feature flags into generated apps. Some of this is live, some is coming. Our own backend already runs the full stack. I'm working to give it to every app we generate.

The honest truth

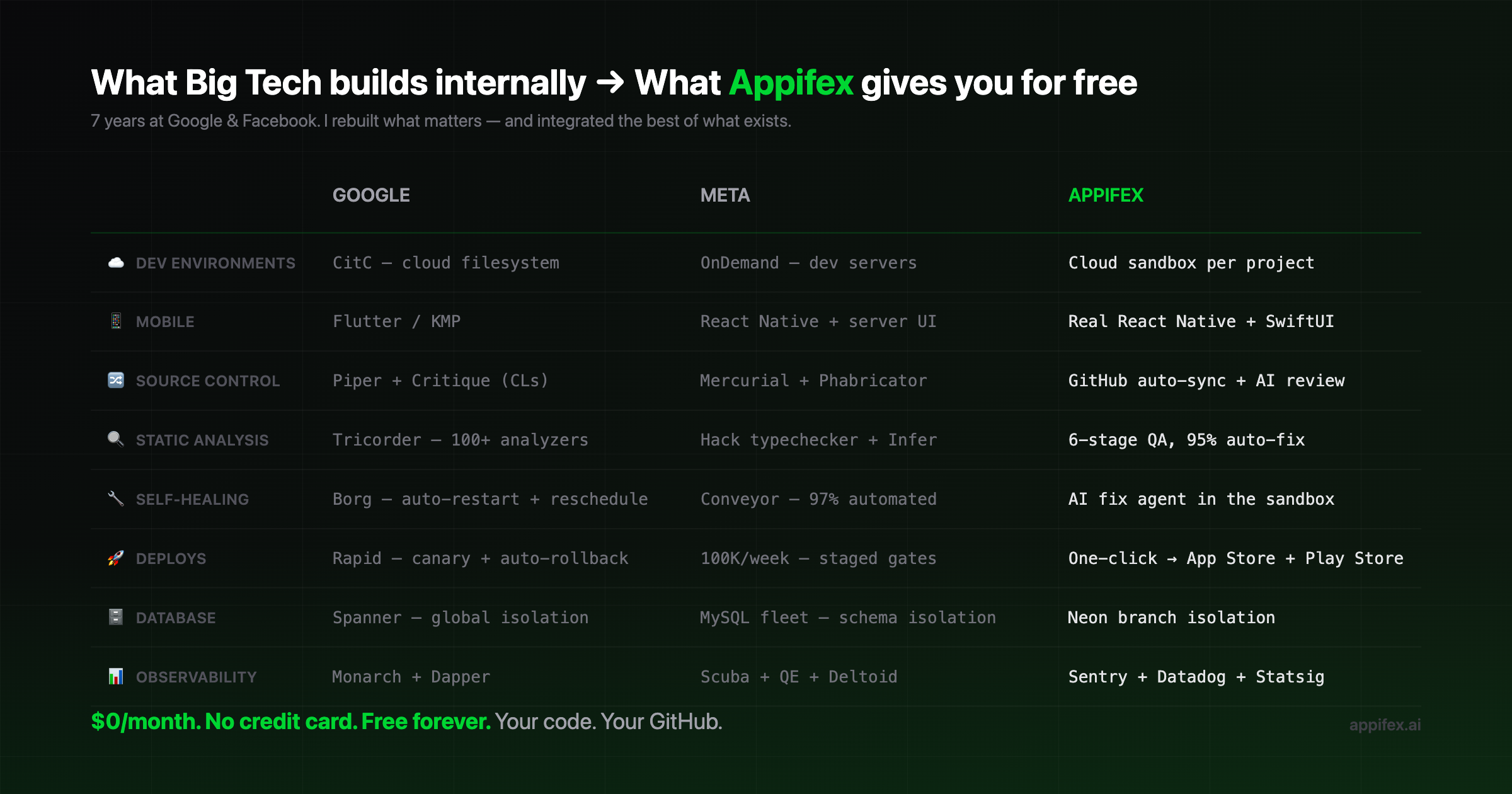

| Category | Google | Meta | Appifex |
|---|---|---|---|
| Dev environments | CitC | OnDemand | Cloud sandbox per project |
| Mobile | Flutter / KMP | React Native + server-driven UI | Real React Native + SwiftUI soon |
| Source control | Piper + Critique (CLs) | Mercurial + Phabricator (diffs) | GitHub auto-sync + AI PR review |
| Static analysis | Tricorder | Hack type checker + Infer + Sandcastle | 6-stage QA pipeline, 95% auto-fix |
| Self-healing | Borg | Conveyor (97% automated) | AI fix agent inside the sandbox |
| Deploys | Rapid (canary + rollback) | 100K/week, staged gates | One-click to App Store + Play Store |
| Database | Spanner | MySQL fleet (schema isolation) | Neon branch isolation |
| Observability | Monarch + Dapper | Scuba + QE + Deltoid (→ Statsig) | Sentry + Datadog + Statsig |

Meta spent years building Tupperware, MobileConfig, Sandcastle, and Buck2. Google built Borg, Spanner, Monarch, and Blaze. Billions of dollars. Thousands of engineers.

I took what mattered for app development and rebuilt it.

Why not just be a dev environment?

People ask if Appifex is more like Replit or GitHub Codespaces. Neither. Codespaces gives you a container and says "good luck." Replit has evolved into an impressive AI app builder with Agent, built-in database, auth, payments, and hosting — but it supports 50+ languages across web, mobile, and everything in between. Breadth is their bet. Depth is ours.

Appifex is a product builder. We pick a stack — React, FastAPI, React Native — and support it at production grade, end to end. Cloud sandbox, AI generation, QA pipeline, self-healing agent, database branching, one-click deploy. Every layer is purpose-built for that stack.

This is a deliberate constraint, and it's the same one Big Tech makes internally. Meta's entire web layer runs on Hack/PHP. Google standardizes on a handful of approved languages. Even companies with tens of thousands of engineers don't let teams pick whatever language they want, because every stack you add means another build system, another deploy pipeline, another set of QA tooling to maintain. Focus is what makes infrastructure excellent instead of merely adequate.

Supporting a stack properly means owning the entire lifecycle: how code is generated, how dependencies resolve, how the build runs, how errors are caught and fixed, how the app deploys, how the database migrates. Do that well for one stack and you get the quality bar that Google and Meta hold internally. Spread thin across every language and you get a fancy terminal.

When there's a real business case, we'll add another stack. JVM is an obvious candidate — many enterprises already run Java or Kotlin for compliance, ecosystem, and organizational reasons. But when we add it, we'll support the entire JVM lifecycle the same way we support Python and TypeScript today. Build tooling, dependency management, QA gates, deploy targets, database patterns. All of it. We don't take that decision lightly because doing it halfway would betray the mission.

The mission is production-grade software for everyone. Not "access to a terminal for everyone." There's a difference.

Appifex Free gives you 1,000 credits every month. No credit card. No paywall. The infrastructure is included.

I'd honestly rather be shipping features than writing this post. But the best infrastructure in the world doesn't matter if nobody knows it exists. That's the game. I hate playing it. But I'm playing it so you don't have to play the infrastructure game.

Try it. Build something. Tell me what's missing. That's all I ask.